I will list some important literatures about the topic of learning general policies through sketches

The research is initiated by Blai Bonet and Hector Geffner

The high level goal of the research is as follows

[!IMPORTANT]

The construction of reusable knowledge (transfer learning) is a central concern in (deep) reinforcement learning, but the semantic and conceptual gap between the low level techniques that are used, and the high-level representations that are required, is too large.

For addressing this challenge, new ideas and methods are required that build on those of planning, deep and reinforcement learning, logic and knowledge representation, and combinatorial optimization. Our approach is a form of top-down representation learning based on a clear separation and characterization of what is to be learned from how (Video). The research methodology that is common in deep learning, which focuses mainly on relative performance is not good enough as it does not give us a crisp understanding. At the same time, we cannot afford not to use deep learning, namely, the optimization of parametric functions (neural nets) by means of stochastic gradient descent, because deep learning represents a very useful, versatile, and effective class of solvers.

An intro: ICAPS 2024 Keynote: Hector Geffner on “Learning Representations to Act and Plan”

Learning Sketch Decompositions in Planning via Deep Reinforcement Learning

https://arxiv.org/abs/2412.08574

Conclusion quote: We have demonstrated that DRL methods can effectively learn the common subgoal structures of entire collections of planning problems, enabling them to be solved efficiently through greedy sequences of IW(k) searches.

Symmetries and Expressive Requirements for Learning General Policies

https://arxiv.org/pdf/2409.15892

Abstract: State symmetries play an important role in planning and generalized planning. In the first case, state symmetries can be used to reduce the size of the search; in the second, to reduce the size of the training set. In the case of general planning, however, it is also critical to distinguish non-symmetric states, i.e., states that represent non-isomorphic relational structures. However, while the language of first-order logic distinguishes non-symmetric states, the languages and architectures used to represent and learn general policies do not. In particular, recent approaches for learning general policies use state features derived from description logics or learned via graph neural networks (GNNs) that are known to be limited by the expressive power of C2, first-order logic with two variables and counting. In this work, we address the problem of detecting symmetries in planning and generalized planning and use the results to assess the expressive requirements for learning general policies over various planning domains. For this, we map planning states to plain graphs, run off-the-shelf algorithms to determine whether two states are isomorphic with respect to the goal, and run coloring algorithms to determine if C2 features computed logically or via GNNs distinguish non-isomorphic states. Symmetry detection results in more effective learning, while the failure to detect non-symmetries prevents general policies from being learned at all in certain domains

On Policy Reuse: An Expressive Language for Representing and Executing General Policies that Call Other Policies

https://openreview.net/forum?id=TSl0tWPiXT

https://ojs.aaai.org/index.php/ICAPS/article/view/31458/33618

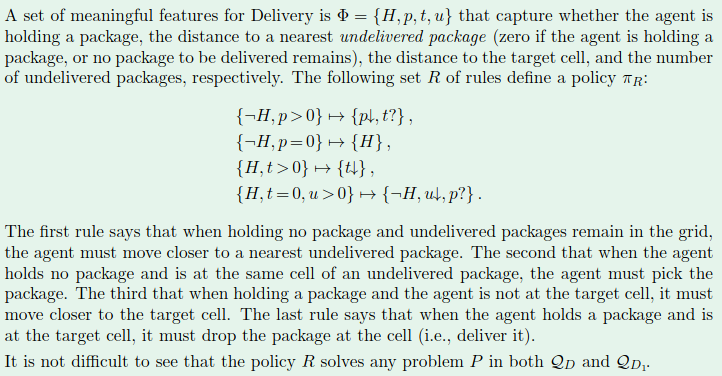

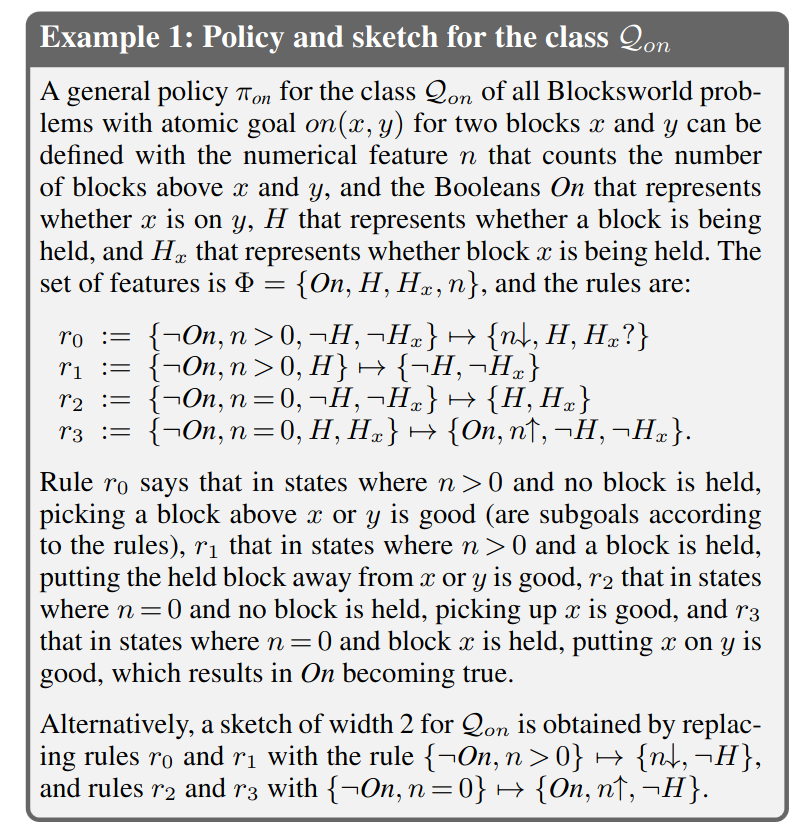

It provides a types of language to express the general policies (which is a collection of rules)

Learning General Policies with Policy Gradient Methods

https://proceedings.kr.org/2023/63/kr2023-0063-stahlberg-et-al.pdf

The learning of general policy is different from standard policy gradient learning as the general policy has different semantic than the standard policy

The long dicussion of the search: https://arxiv.org/pdf/2311.05490